AI Video Generation in 2026: 5 Trends That Are Changing Everything for Creators

The AI video generation market is projected to reach $847 million in 2026, growing to $3.35 billion by 2034. But that number only tells part of the story. The real shift is not financial — it is creative.

According to the Artlist AI Trend Report 2026, which surveyed 6,500 creators across 140 countries, 87% of creative professionals now use AI tools for video creation. Two-thirds use them weekly. Teams report producing 5-10x more content with the same resources.

For anyone creating video content — whether for marketing, education, entertainment, or social media — the question is no longer whether AI video tools will reshape your workflow. It is which trends will define the next era, and how to position yourself ahead of them.

Here are five trends reshaping AI video generation in 2026 — and what each one means for you.

Trend 1: Professional Video Is No Longer a Budget Game

For decades, quality video required serious investment: cameras, lights, studios, editors, and post-production suites. A polished 30-second product video could cost $2,000-$10,000.

AI video generators have collapsed that equation. Tools like Seedance 2.0, Sora 2, and Veo 3.1 now produce 2K-resolution clips with realistic physics, cinematic camera movements, and synchronized audio — often for under a dollar per clip.

As Forbes reports, ByteDance's Seedance 2.0 "nails real-world physics and hyper-real outputs," producing results that blur the line between AI-generated and traditionally filmed content.

The implication is significant. As Artlist's VP of Content Orit Bar Niv puts it: "When the barrier to creating polished work is zero, the barrier to being memorable is higher than ever." In other words, production quality alone no longer differentiates content. Strategy, originality, and creative vision do.

What this means for you: Stop treating video production as a premium expense. Reallocate budget from production to strategy and distribution. The cost of creating a professional video clip has dropped from thousands of dollars to, in many cases, under a dollar. Use those savings to create more content, test more ideas, and iterate faster.
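To make the budget shift concrete, here is a rough back-of-the-envelope sketch. It uses the $2,000-$10,000 range quoted above for a traditional 30-second video and assumes a ceiling of $1 per AI-generated clip, based on the "under a dollar per clip" figure. The function name and constants are illustrative, and actual pricing varies by tool and plan.

```python
# Illustrative figures only: the traditional range is the one quoted in this
# article, and AI_COST_PER_CLIP is an assumed ceiling ("under a dollar per clip").
TRADITIONAL_COST_RANGE = (2_000, 10_000)  # USD per polished 30-second video
AI_COST_PER_CLIP = 1.00                   # USD, assumed upper bound


def clips_per_budget(budget_usd: float, cost_per_clip: float = AI_COST_PER_CLIP) -> int:
    """Return how many AI-generated clips a given budget could cover."""
    if cost_per_clip <= 0:
        raise ValueError("cost_per_clip must be positive")
    return int(budget_usd // cost_per_clip)


for budget in TRADITIONAL_COST_RANGE:
    print(f"${budget:,} traditional budget -> up to {clips_per_budget(budget):,} AI clips")
```

Keep in mind that not every generated clip is usable. Discarded attempts and iterations count against that total, so the practical number of finished clips will be lower.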

Trend 2: Multi-Modal Input Is Becoming the Standard

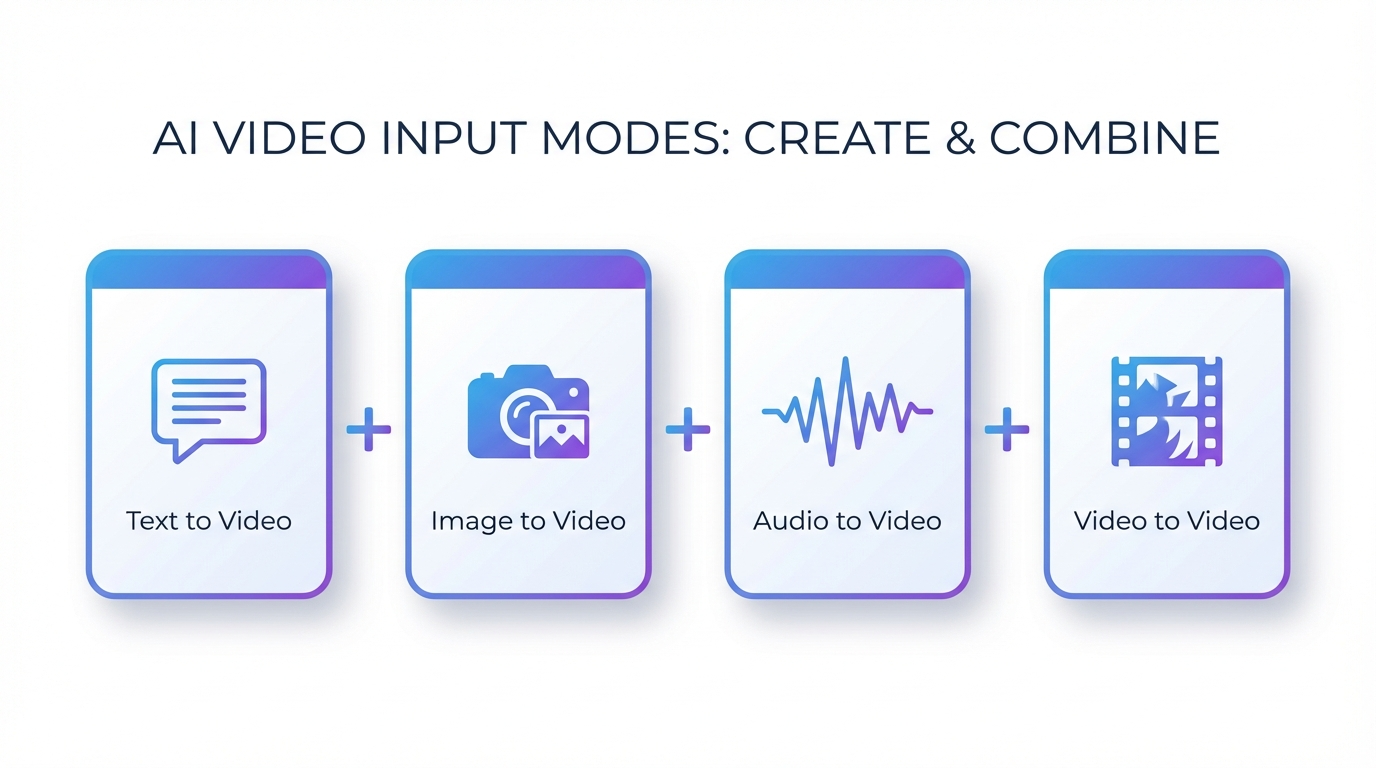

Early AI video tools offered a single input: type a text prompt, get a video. That era is ending.

The most capable tools in 2026 accept multiple input types simultaneously. You can feed in a reference image, add a text description of the action you want, specify the audio mood, and let the model combine everything into a cohesive clip.

Seedance 2.0 was among the first to formalize this as "quad-modal input" — accepting text, images, video clips, and audio as starting points, either individually or combined. This matters because real creative work rarely starts from a blank text box. You have brand photos, existing footage, voiceover recordings, and music tracks. Multi-modal input means AI video tools can now work with what you already have, rather than demanding you describe everything from scratch.

Grand View Research estimates the AI video generator market will grow at a 20.3% CAGR through 2033, with multi-modal capabilities cited as a primary driver of enterprise adoption.
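For readers who want to sanity-check growth figures like these, the compound annual growth rate implied by two market-size projections is easy to compute. The sketch below applies the standard CAGR formula to the $847 million (2026) and $3.35 billion (2034) figures quoted in the introduction. The function name is illustrative, and those figures may come from a different report and time horizon than the 20.3% estimate, so the two rates need not match.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two values `years` apart."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the introduction: $847 million (2026) to $3.35 billion (2034).
rate = implied_cagr(847e6, 3.35e9, 2034 - 2026)
print(f"Implied CAGR: {rate:.1%}")
```

Run on those two figures, the implied rate comes out just under 19% per year, in the same general range as the Grand View Research estimate.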

What this means for you: Start building an asset library. Collect reference images, brand videos, music tracks, and voiceover clips that define your visual identity. The better your input materials, the more consistent and on-brand your AI-generated videos will be.

Trend 3: Audio-Aware Generation Changes Everything

Until recently, AI video generation treated audio as an afterthought — you generated a silent clip, then added music or sound effects separately. That workflow is disappearing.

The latest models generate video with full audio awareness. A clip of rain hitting a window includes the sound of raindrops. A person speaking has lip movements synchronized to the audio. A product being placed on a table produces a natural thud.

As Higgsfield AI predicts, 2026 is the year AI video generators stop treating sound as an afterthought and begin "synthesizing audio with full contextual awareness."

This matters enormously for practical applications. Beautifully generated video with mismatched audio feels unfinished and amateurish. Native audio sync eliminates an entire post-production step while dramatically improving the perceived quality of the output.

Seedance 2.0's audio-to-video mode goes further: you can provide a voiceover recording or music track as the primary input, and the model generates video that visually matches the audio. For podcast creators, musicians, and educators, this inverts the traditional workflow — audio becomes the creative starting point, with video built to match.

What this means for you: If you have been creating video and audio separately, test integrated generation. Upload a voiceover or music track as your starting input and let the AI build the visuals around it. The time saved in post-production alignment alone can justify the switch.

Trend 4: Prompting Is Replacing Production Skills

The Artlist survey reveals a profound shift in what makes a successful video creator in 2026. In what the report calls the "Age of Taste," the ability to direct AI through natural language now matters more than technical software skills.

The data supports this: 37% of creators say AI's primary benefit is exploring concepts faster, while 63% now prioritize strategic viability over production quality. Teams are hiring for creative direction and strategic thinking, not just software proficiency.

This is where features like Director Mode become significant. Rather than learning complex camera rigging or motion graphics software, creators describe their vision in natural language: "slow pan left across the skyline at golden hour, then dolly zoom into the cafe window." The AI interprets the creative intent and generates the corresponding camera work.

This does not mean technical knowledge is irrelevant — understanding lighting, composition, and pacing still matters. But the execution layer has shifted from manual production to AI-directed creation. Your creative vocabulary becomes your most valuable production tool.

What this means for you: Invest time in developing your creative vocabulary. Study cinematography terms, learn how camera movements affect emotion, and practice writing detailed scene descriptions. These skills transfer across every AI video tool and will only become more valuable as the technology improves.

Trend 5: From Single Clips to Multi-Scene Storytelling

The earliest AI video tools produced isolated clips — single 4-10 second outputs with no connection to each other. Characters changed appearance between generations. Visual styles varied randomly. Building a coherent narrative was nearly impossible.

That limitation is fading. As FocalML's analysis notes, the most significant evolution in 2026 is the shift toward "multi-scene generation, persistent characters across clips, and story-aware sequencing."

Character consistency — maintaining the same face, clothing, and proportions across multiple generated clips — is the technical breakthrough enabling this shift. Seedance 2.0's @ reference system lets creators tag a character once and maintain their identity across every subsequent scene. This means a brand ambassador, product mascot, or narrative protagonist can appear in dozens of clips while remaining instantly recognizable.

The commercial implications are substantial. Instead of producing individual social media clips in isolation, marketing teams can now generate entire narrative campaigns — multi-episode content series where characters, settings, and visual style remain consistent from the first clip to the last.

What this means for you: Start thinking in sequences, not single clips. Plan multi-scene narratives before generating individual videos. Create character profiles and scene breakdowns the way a filmmaker would — the AI handles execution, but coherent storytelling still requires human planning.
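One way to do that planning is to write the character profiles and scene breakdown down as structured data before generating anything. The sketch below is a hypothetical planning aid in plain Python. It does not use Seedance's @ reference system or any real tool's API, and every class, field, and example value is an assumption made for illustration. It simply reuses the same character description in every scene's text prompt so the wording stays consistent from clip to clip.

```python
from dataclasses import dataclass, field


@dataclass
class Character:
    """Hypothetical character profile reused across every scene."""
    name: str
    appearance: str


@dataclass
class Scene:
    """One planned clip: where it happens, what happens, and the camera move."""
    setting: str
    action: str
    camera: str
    characters: list[Character] = field(default_factory=list)

    def to_prompt(self, style: str) -> str:
        # Repeat the full character description in every scene so the
        # wording is identical from one clip to the next.
        cast = "; ".join(f"{c.name}: {c.appearance}" for c in self.characters)
        return f"{style}. {self.setting}. {cast}. {self.action}. Camera: {self.camera}."


def build_prompts(scenes: list[Scene], style: str) -> list[str]:
    """Turn a scene breakdown into one text prompt per clip, in order."""
    return [scene.to_prompt(style) for scene in scenes]


# Made-up example: a product mascot appearing in two consecutive scenes.
mascot = Character("Pip", "small orange robot with a round head and blue scarf")
plan = [
    Scene("City skyline at golden hour", "Pip waves from a rooftop", "slow pan left", [mascot]),
    Scene("Cafe window, same evening", "Pip orders a coffee", "dolly zoom in", [mascot]),
]
for number, prompt in enumerate(build_prompts(plan, "Warm cinematic look"), start=1):
    print(f"Scene {number}: {prompt}")
```

The resulting prompts can be pasted into whichever tool you use. Identical wording alone does not guarantee visual consistency, so treat a plan like this as a complement to a tool's own character-reference features rather than a replacement for them.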

Your 2026 AI Video Strategy

These five trends point in the same direction: the value in video creation is migrating from execution to vision. The tools are getting cheaper, faster, and more capable. The differentiator is no longer who can produce — it is who can think, plan, and create with purpose.

Here is a practical starting point:

- Reallocate production budget. If you are spending $5,000 per video on traditional production, test creating the same content with AI tools at a fraction of the cost. Redirect savings toward strategy, distribution, and testing.

- Build your asset library. Collect brand images, product photos, voiceover recordings, and music that define your visual identity. Multi-modal AI tools work best when they have strong inputs.

- Test audio-first workflows. Try generating video from voiceover or music rather than text. For many use cases — explainers, social content, product demos — this produces more natural results.

- Develop creative direction skills. Study cinematography, learn camera movement terminology, and practice writing detailed scene descriptions. Platforms like SeedanceAI let you experiment with Director Mode and multi-modal generation at low cost.

- Plan multi-scene content. Before generating individual clips, outline your narrative arc. Define characters, settings, and visual style upfront, then generate scenes that build on each other.

The creators who thrive in this landscape will not be the most technically skilled. They will be the most strategically creative — the ones who understand that AI video generation is not replacing storytelling. It is making storytelling accessible to anyone with a vision worth sharing.

The tools are available. The cost barriers are falling. And the window for early adoption — where experimenting with AI video generation gives you a measurable advantage over competitors who have not yet started — is open right now.

Market data from Fortune Business Insights and Grand View Research. Survey data from Artlist AI Trend Report 2026 (6,500 creators, 140 countries). Tool capabilities reflect features as of March 2026.